In this post, we show a few examples of SSD (object detection) and object tracking and discuss one question: “Is SSD able to do object tracking?” If not, what causes it to fail? We compare the detection results with and without tracking, and hope to give you some intuition about both.

Introduction

In our previous post, we showed that SSD is an effective algorithm for object detection. For videos, detection is done frame by frame, so in theory we know each object’s location even after N frames, as long as the object is detected in each of them. We can therefore obtain the trajectory of each object, from which its movement can be inferred, and that movement is what we are interested in.
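To make "obtaining a trajectory from frame-by-frame detections" concrete, here is a minimal sketch. It assumes each frame yields a list of boxes in `(x_min, y_min, x_max, y_max)` form and chains boxes across consecutive frames by greedy IoU matching. The function names and the `min_iou` value are our own illustration, not part of SSD.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def link_detections(
    frames: List[List[Box]], min_iou: float = 0.3
) -> Dict[int, List[Tuple[int, Box]]]:
    """Greedily chain per-frame boxes into {track_id: [(frame_index, box), ...]}."""
    tracks: Dict[int, List[Tuple[int, Box]]] = {}
    next_id = 0
    for t, boxes in enumerate(frames):
        unmatched = list(boxes)
        for track in tracks.values():
            last_t, last_box = track[-1]
            if last_t != t - 1 or not unmatched:
                continue  # only extend tracks that were seen in the previous frame
            best = max(unmatched, key=lambda b: iou(last_box, b))
            if iou(last_box, best) >= min_iou:
                track.append((t, best))
                unmatched.remove(best)
        for box in unmatched:  # every leftover box starts a new trajectory
            tracks[next_id] = [(t, box)]
            next_id += 1
    return tracks
```

Note that in this sketch a single missed detection ends the trajectory and a later detection starts a new one, which is exactly the weakness the examples below probe.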

Tracking, by contrast, starts from a Region of Interest (ROI) that we define in one frame and aims to localize that ROI precisely in the following frames. Here is a nice introduction to several trackers in OpenCV, which you can try immediately.

Comparison

Intuitively, SSD does the detection, and tracking takes its output and keeps following the objects. But can we eliminate tracking and have everything done by SSD? Let’s have a look at the following examples. The blue bounding boxes (bboxes) show the SSD detection results. After an object is detected, tracking is triggered manually at an arbitrary time, shown as the red bbox on the right.

Left: SSD only

Right: SSD + Tracking

Example 1: Good video quality + non-fast motions

There are some detection failures on some objects, but let’s ignore them for the moment and focus on the champion runner (track No. 7). Detection succeeds on nearly every frame, and so does tracking. There is hardly any difference between left and right because the runners repeat the same poses. Once a runner is detected, it is fairly easy for SSD to detect the runner again in the following frame, because the motion from one frame to the next stays about the same. Tracking also works well.

Example 2: Good video quality + fast motion

In this example, the camera angle changes gradually, and so do the runners’ poses. For some frames, the detection confidence is too low for any bounding box to be drawn. A likely explanation is that those poses appear rarely in the training dataset. Meanwhile, tracking still works smoothly and predicts precise bboxes.
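The "no bounding box is drawn" behaviour is typically the result of a confidence threshold applied to the detector's output. The sketch below assumes each detection carries a score in [0, 1] and that boxes below a cutoff are simply dropped. The `Detection` type and the 0.5 default are illustrative assumptions, not values taken from our SSD setup.

```python
from typing import List, NamedTuple, Tuple


class Detection(NamedTuple):
    label: str
    score: float  # detector confidence in [0, 1]
    box: Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def boxes_to_draw(detections: List[Detection], threshold: float = 0.5) -> List[Detection]:
    """Keep only detections confident enough to be drawn."""
    return [d for d in detections if d.score >= threshold]


# An unusual pose may still produce a candidate box, but with a score below the cutoff.
frame_detections = [
    Detection("person", 0.91, (10.0, 20.0, 60.0, 140.0)),
    Detection("person", 0.32, (80.0, 25.0, 130.0, 150.0)),
]
drawn = boxes_to_draw(frame_detections)
print(len(drawn), "of", len(frame_detections), "boxes drawn")
```

Lowering the threshold recovers more of these frames at the cost of more false positives, so it trades one kind of trajectory error for another.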

Example 3: Good video quality + non-fast motions + bird’s-eye-view camera

In this example, the video quality is the same as in Example 1, but the camera looks down from a bird’s-eye view. Humans seen from this angle are probably a small minority in the original training dataset, and SSD is no longer able to detect the runners. Still, the tracking on the right works well, as we expected.

Example 4: Bad video quality + fast motions

Let’s look at a worse case, where the video is blurry and out of focus, which makes SSD detection even more difficult. Tracking succeeded at the beginning but shifted to another, similar-looking target when the two overlapped. Compared with the other examples, the tracking as a whole seems a bit unstable.

Here is a video containing all the above examples in a larger size.

Tracking Algorithm

After watching the above examples, you may be interested in the tracking algorithm. The one used in the above examples is a CNN-based network, shown below:

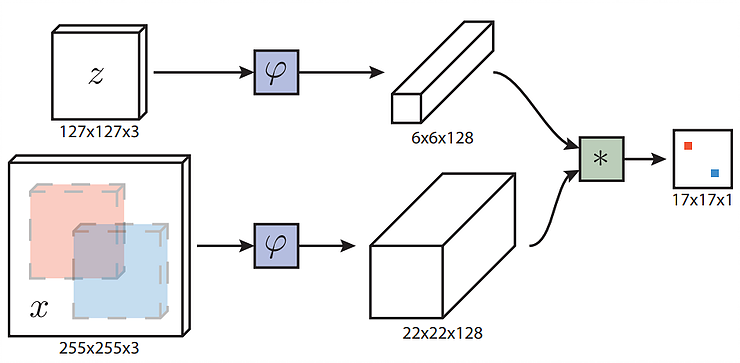

Paper: Fully-Convolutional Siamese Networks for Object Tracking

Implementation: A Tensorflow Implementation

Highlights: Real-time tracking on GPU

Architecture Introduction: In the above diagram, “z” is the target template (i.e. the object to track) and “x” is the search region (i.e. a subsequent frame in which the target object appears). Tracking an object then amounts to finding the region in “x” that is most similar to “z”. To achieve this, both “z” and “x” are fed into “φ”, which maps the raw images into feature space, producing the 6x6x128 and 22x22x128 feature maps drawn as cuboids. According to the paper, “φ” is the same convolutional embedding network (conv + pool layers) applied to both inputs; because it is fully convolutional, it accepts inputs of different sizes, which is why the two feature maps differ in spatial dimensions. The asterisk “*” denotes a cross-correlation, which can be implemented as a convolution: the 6x6x128 feature map is used as the kernel and slid over the 22x22x128 feature map, producing the final 17x17x1 output (22 - 6 + 1 = 17 positions along each axis). This output is a score map representing the similarity between “z” and each position in “x”. The largest value on the score map marks the center of the region in “x” that most resembles “z”.
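The "*" step can be sketched in a few lines of NumPy. This is only an illustration of the cross-correlation under our own assumptions: random arrays stand in for the features that “φ” would produce, and the function name `score_map` is ours, not taken from the paper or the TensorFlow implementation.

```python
import numpy as np


def score_map(z_feat: np.ndarray, x_feat: np.ndarray) -> np.ndarray:
    """Slide z_feat over x_feat and sum the element-wise products at each offset."""
    zh, zw, zc = z_feat.shape
    xh, xw, xc = x_feat.shape
    assert zc == xc, "template and search features need the same channel count"
    out = np.empty((xh - zh + 1, xw - zw + 1), dtype=np.float64)
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            window = x_feat[i:i + zh, j:j + zw, :]
            out[i, j] = np.sum(window * z_feat)
    return out


rng = np.random.default_rng(0)
z = rng.standard_normal((6, 6, 128))          # stand-in for the template features
x = 0.1 * rng.standard_normal((22, 22, 128))  # stand-in for the search-region features
x[9:15, 4:10, :] = z                          # plant the template at offset (9, 4)

scores = score_map(z, x)
peak = np.unravel_index(np.argmax(scores), scores.shape)
print(scores.shape, peak)  # the peak should fall on the planted offset
```

The score map has 17x17 entries because a 6-wide kernel fits into a 22-wide map at 22 - 6 + 1 = 17 positions. In a real tracker the peak position still has to be mapped back from score-map coordinates to image coordinates, which this sketch leaves out.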

Discussions

- Object movements

As shown in the above examples, fast motions may cause SSD detection to fail, which leaves a gap between detected frames. The longer this gap lasts, the harder it is to obtain a precise trajectory. Tracking is much less affected, as long as the interval between frames is short.

- Video quality

Video quality affects both, especially SSD. Detection amounts to “finding adequate information about a target object”. To keep this process stable, it is desirable to keep the image information as close as possible to the raw input. Anything such as video compression, defocus, or subsampling harms detection precision and tracking stability. Therefore, if your solution chains several Computer Vision algorithms, use the highest-quality input image/video you can; otherwise, you will end up with endless trouble caused by the bad quality.
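The gap described under "Object movements" can be made concrete with a small sketch. It assumes the detector gives one box center per frame, or `None` when detection fails, measures the longest gap, and fills gaps by linear interpolation. Linear interpolation is our own simplification here; the longer the gap, the less likely the real motion was a straight line.

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float]


def longest_gap(centers: List[Optional[Point]]) -> int:
    """Length of the longest run of frames with no detection (None)."""
    longest = current = 0
    for c in centers:
        current = current + 1 if c is None else 0
        longest = max(longest, current)
    return longest


def fill_gaps(centers: List[Optional[Point]]) -> List[Optional[Point]]:
    """Linearly interpolate missing centers between two detected frames.

    Leading and trailing gaps stay None: there is nothing to interpolate between.
    """
    filled = list(centers)
    known = [i for i, c in enumerate(centers) if c is not None]
    for a, b in zip(known, known[1:]):
        (xa, ya), (xb, yb) = centers[a], centers[b]
        for i in range(a + 1, b):
            w = (i - a) / (b - a)  # fraction of the way from frame a to frame b
            filled[i] = (xa + w * (xb - xa), ya + w * (yb - ya))
    return filled
```

For a trajectory such as `[None, (0.0, 0.0), None, None, None, (8.0, 4.0), None]`, the three-frame gap is filled with evenly spaced points between the two detections, while the first and last frames remain unknown.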

Conclusion

So, back to our initial question: “Is SSD able to do object tracking?” We think it depends on the following factors:

- Is your camera in a non-fixed place (for example, a dash-cam inside an automobile)?

- Does the object have fast motions?

- Does the trajectory need to be as precise as possible?

If the answer to all three is yes, you probably need a tracking algorithm after SSD detection. But if you only need to track a nearby car, as shown here, SSD alone may be enough, as cars are not as unpredictable as human gestures. Additionally, some tracking algorithms (including the one introduced in this post) do not take object overlap (see Example 4) into account, in which case they are more likely to lose the target. Make sure this is not a critical issue in your application before adopting this tracking algorithm.
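One way to combine the two is sketched below: use the detector's box whenever it is available and fall back to a tracker, seeded with the last known box, when detection fails. This is a hypothetical arrangement for illustration, not the setup used in the videos above (where tracking was triggered manually), and `detect` and `track` are placeholder callables rather than real SSD or tracker APIs.

```python
from typing import Callable, Iterable, List, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def detect_then_track(
    frames: Iterable[object],
    detect: Callable[[object], Optional[Box]],
    track: Callable[[object, Box], Box],
) -> List[Tuple[Optional[Box], str]]:
    """Prefer the detector's box; fall back to the tracker when detection fails."""
    results: List[Tuple[Optional[Box], str]] = []
    last_box: Optional[Box] = None
    for frame in frames:
        box = detect(frame)
        source = "detector"
        if box is None and last_box is not None:
            box = track(frame, last_box)  # bridge the gap from the last known box
            source = "tracker"
        elif box is None:
            source = "none"  # nothing detected yet, so nothing to track
        results.append((box, source))
        if box is not None:
            last_box = box
    return results
```

Because the tracker is re-seeded from every successful detection, drift such as the target switch in Example 4 can be corrected as soon as the detector finds the object again. During a long detection gap, however, nothing in this sketch guards against it.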